La semaine prochaine déjà se tient CHAR(11), la conférence spécialisée sur le Clustering, la Haute Disponibilité et la Réplication avec PostgreSQL. C’est en Europe, à Cambridge cette fois, et c’est en anglais même si plusieurs compatriotes seront dans l’assistance.

Si vous n’avez pas encore jeté un œil au programme, je vous encourage à le faire. Même si vous n’aviez pas prévu de venir… parce qu’il y a de quoi vous faire changer d’avis !

While Magnus is all about PG Conf EU already, you have to realize we’re just landed back from PG Con in Ottawa. My next stop in the annual conferences is CHAR 11, the Clustering, High Availability and Replication conference in Cambridge, 11-12 July. Yes, on the old continent this time.

This year’s pgcon hot topics, for me, have been centered around a better grasp at SSI and DDL Triggers. Having those beasts in PostgreSQL would allow for auditing, finer privileges management and some more automated replication facilities.

I’ve been working on skytools3 packaging lately. I’ve been pushing quite a lot of work into it, in order to have exactly what I needed out of the box, after some 3 years of production and experiences with the products. Plural, yes, because even if pgbouncer and plproxy are siblings to the projets (same developers team, separate life cycle and releases), then skytools still includes several sub-projects.

Here’s what the skytools3 packaging is going to look like:

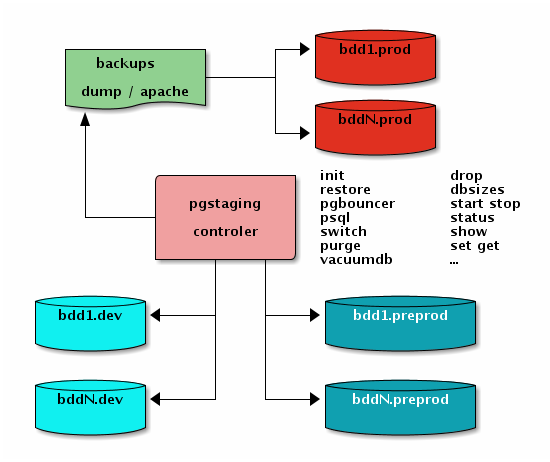

If you don’t remember about what pg_staging is all about, it’s a central console from where to control all your PostgreSQL databases. Typically you use it to manage your development and pre-production setup, where developers ask you pretty often to install them some newer dump from the production, and you want that operation streamlined and easy.

Usage The typical session would be something like this:

pg_staging> databases foodb.dev foodb foodb_20100824 :5432 foodb_20100209 foodb_20100209 :5432 foodb_20100824 foodb_20100824 :5432 pgbouncer pgbouncer :6432 postgres postgres :5432 pg_staging> dbsizes foodb.

Hannu just gave me a good idea in this email on -hackers, proposing that pg_basebackup should get the xlog files again and again in a loop for the whole duration of the base backup. That’s now done in the aforementioned tool, whose options got a little more useful now:

Usage: pg_basebackup.py [-v] [-f] [-j jobs] "dsn" dest Options: -h, --help show this help message and exit --version show version and quit -x, --pg_xlog backup the pg_xlog files -v, --verbose be verbose and about processing progress -d, --debug show debug information, including SQL queries -f, --force remove destination directory if it exists -j JOBS, --jobs=JOBS how many helper jobs to launch -D DELAY, --delay=DELAY pg_xlog subprocess loop delay, see -x -S, --slave auxilliary process --stdin get list of files to backup from stdin Yeah, as implementing the xlog idea required having some kind of parallelism, I built on it and the script now has a --jobs option for you to setup how many processes to launch in parallel, all fetching some base backup files in its own standard ( libpq) PostgreSQL connection, in compressed chunks of 8 MB (so that’s not 8 MB chunks sent over).